A friendly tour · v0.1

Spiking neural networks are convex optimizers.

A simple population of leaky integrate-and-fire neurons, with the right connectivity, solves a quadratic program. Each spike is a constraint becoming active. Each silence is a gradient step. The page below walks through the idea, the math, and a runnable Python implementation.

- Install: pip install snn-opt

- Backends: Python · C++

- License: Apache-2.0

A 2-D quadratic with three half-space constraints. The trajectory drifts toward the unconstrained optimum x*ᵤ, spikes at each active wall, and settles at the constrained optimum.

Three beats

The idea, distilled.

The whole framework collapses into three repeating moves. Everything else — the convergence proofs, the hardware story, the spike raster — falls out of these.

Drift.

Between spikes, each neuron's membrane voltage moves down the gradient of an objective: plain gradient descent, dressed up as biology.

Spike.

When the voltage would push the trajectory past a constraint, a spike fires. The spike re-projects the state onto the feasible boundary — a discrete correction.

Settle.

The spike train and the drift balance at the constrained optimum. Active spikes encode the active constraint set. The math is exactly that of a primal-dual QP solver.
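The three beats can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the snn_opt API: the function name `spiking_qp_sketch`, the toy problem data, the step size, and the choice of a plain Euler step followed by a per-constraint projection are all made up for this example. The problem is to minimize 0.5·xᵀAx + bᵀx subject to Cx ≤ d.

```python
import numpy as np

def spiking_qp_sketch(A, b, C, d, x0, eta=0.05, steps=500):
    """Hypothetical helper: drift by gradient descent, 'spike' by projection."""
    x = np.asarray(x0, dtype=float).copy()
    spikes = np.zeros(len(d), dtype=int)      # one counter per constraint "neuron"
    for _ in range(steps):
        # Drift: one Euler step down the gradient of 0.5 x^T A x + b^T x.
        x = x - eta * (A @ x + b)
        # Spike: any constraint c_i . x <= d_i that is now violated fires,
        # and the state is projected back onto that constraint's boundary.
        for i, (c, d_i) in enumerate(zip(C, d)):
            excess = c @ x - d_i
            if excess > 0:
                x = x - (excess / (c @ c)) * c
                spikes[i] += 1
    return x, spikes

# Toy 2-D problem with three half-spaces (illustrative numbers only).
A = np.array([[2.0, 0.0], [0.0, 1.0]])
b = np.array([-4.0, -1.0])                    # unconstrained optimum at (2, 1)
C = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
d = np.array([1.0, 0.5, 3.0])                 # x1 <= 1, x2 <= 0.5, x1 + x2 <= 3

x_opt, spikes = spiking_qp_sketch(A, b, C, d, x0=[-1.0, -1.0])
print(x_opt, spikes)
```

In this toy problem the state settles at about (1, 0.5). The first two constraints keep firing once the trajectory reaches them, while the third never fires, which is the sense in which the spikes encode the active set. The one-pass projection is exact here because the two active walls are orthogonal; a general constraint set would need a more careful projection step.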

A picture

What it looks like.

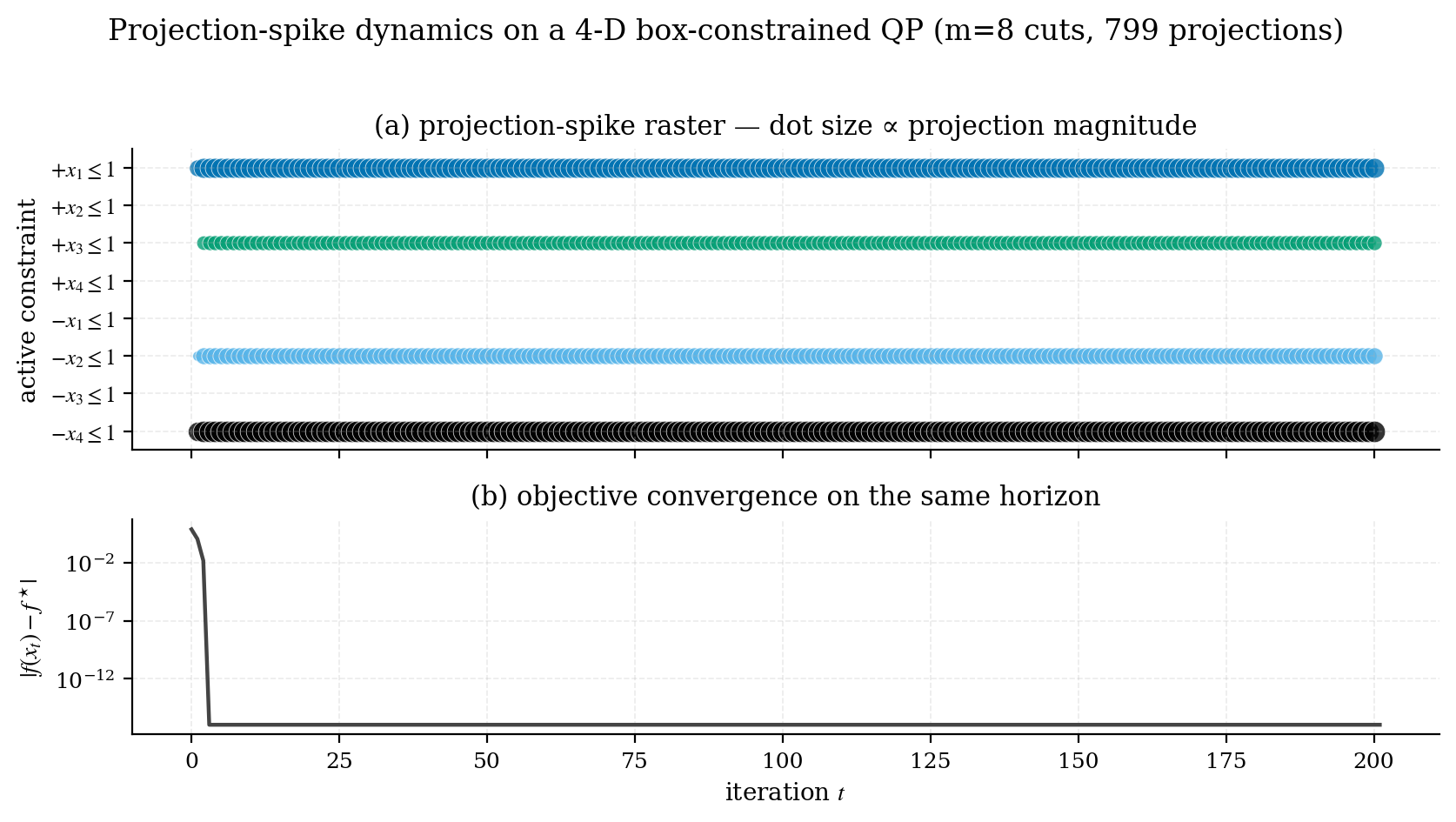

A 4-D quadratic, optimum outside a box constraint. The trajectory bounces against the four "active" faces every iteration; each dot is one projection event. Below it: the objective gap collapsing on the same iteration axis.

benchmarks/02_spike_raster.py.
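A rough stand-in for the kind of data behind such a plot, assuming a diagonal 4-D quadratic and the box [-1, 1]⁴ (both chosen here for illustration, not taken from the benchmark script): each iteration takes a gradient step, clips back into the box, and records how many coordinates were clipped and how far the objective is from its constrained minimum.

```python
import numpy as np

# Hypothetical 4-D problem. A is diagonal so the box-constrained optimum
# is simply the unconstrained optimum clipped to the box.
A = np.diag([1.0, 2.0, 3.0, 4.0])
x_unc = np.full(4, 2.0)            # unconstrained optimum, outside the box
b = -A @ x_unc
lo, hi = -1.0, 1.0

def objective(x):
    return 0.5 * x @ A @ x + b @ x

x_star = np.clip(x_unc, lo, hi)    # valid only because A is diagonal
f_star = objective(x_star)

eta = 0.1                          # step size, below 1 / (largest eigenvalue of A)
x = np.zeros(4)
gaps, events = [], []
for _ in range(50):
    z = x - eta * (A @ x + b)                              # drift
    events.append(int(np.count_nonzero((z < lo) | (z > hi))))  # projection events
    x = np.clip(z, lo, hi)                                 # project onto the box
    gaps.append(objective(x) - f_star)                     # objective gap

print(events[-1], gaps[0], gaps[-1])
```

Once the trajectory has reached the corner, all four faces register a projection event on every iteration and the gap stays at zero.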

Map

What you'll find here.

Four entry points. Pick whichever matches your appetite — the intuition essay, the hands-on tutorials, the published papers, or the source code itself.

- Intro essay · 8 min

The spiking idea explained from scratch, with no prior neuromorphic background required.

- Tutorials · hands-on

Walkthroughs: formulating SVMs, ridge regression, PCA and a few control problems as QPs you can drop into the solver.

- Papers · peer-reviewed

The published work that uses this framework, with abstracts and DOI links.

- snn_opt repo · source · Apache-2.0

Canonical Python implementation: solver, compiled C++ backend (HLS-compatible for FPGA deployment), examples, benchmarks, full mathematical writeup.
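To give a flavour of what "formulating a problem as a QP" means, here is a small sketch for ridge regression. It only builds the matrices of the objective 0.5·wᵀAw + bᵀw and checks them against the closed-form ridge solution with NumPy. The helper name `ridge_as_qp` and the (A, b) layout are assumptions for this example; the tutorials define the input convention the solver actually expects.

```python
import numpy as np

def ridge_as_qp(X, y, lam):
    """Hypothetical helper: rewrite ||Xw - y||^2 + lam * ||w||^2
    as 0.5 * w^T A w + b^T w (dropping the constant y^T y)."""
    n_features = X.shape[1]
    A = 2.0 * (X.T @ X + lam * np.eye(n_features))
    b = -2.0 * (X.T @ y)
    return A, b

rng = np.random.default_rng(0)
X = rng.normal(size=(20, 3))       # synthetic data, for illustration only
y = rng.normal(size=20)
lam = 0.1

A, b = ridge_as_qp(X, y, lam)

# The unconstrained QP minimum solves A w = -b ...
w_qp = np.linalg.solve(A, -b)
# ... and should match the textbook ridge solution (X^T X + lam I)^-1 X^T y.
w_closed = np.linalg.solve(X.T @ X + lam * np.eye(3), X.T @ y)

print(np.allclose(w_qp, w_closed))
```

Ridge regression has no inequality constraints, so it is the simplest case; SVMs and control problems add a constraint matrix on top of the same quadratic form.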

Install

Two lines and you're in.

Prebuilt wheels for Linux (x86_64, aarch64), macOS (Apple Silicon), and Windows across CPython 3.9–3.13. The compiled C++ backend ships inside the wheel; no toolchain needed on your side.

pip install snn-opt

Then head to the Quickstart for a five-minute walkthrough. The PyPI distribution name is snn-opt (hyphenated, per PEP 503); the Python import name is snn_opt. Pick the C++ kernel with backend='c' for a roughly 10× speedup on the inner projection loop; the same kernel source is HLS-compatible and is the basis for the planned FPGA deployment.
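If the two spellings are confusing, the rule behind them is short. The sketch below applies the name normalization from PEP 503 using only the standard library; the function name `normalize_dist_name` is made up for this example, and swapping hyphens for underscores to get the import name is a common convention rather than something PEP 503 guarantees.

```python
import re

def normalize_dist_name(name: str) -> str:
    """PEP 503 normalization: runs of '-', '_' and '.' become one '-', lowercased."""
    return re.sub(r"[-_.]+", "-", name).lower()

dist_name = normalize_dist_name("snn_opt")     # how the package index spells it
import_name = dist_name.replace("-", "_")      # convention only, not a rule

print(dist_name, import_name)
```

So `pip install snn_opt` and `pip install snn-opt` resolve to the same distribution, while Python code always writes `import snn_opt`.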

For whom

Who this is for.

Students starting a thesis on neuromorphic methods. Researchers from optimization or control who heard "spiking networks" and weren't sure what to make of it. Anyone who finds beauty in connections between fields that look unrelated until they don't.

If you want the formal version, head to the snn_opt repository: it has the full theory document, an academic-style README, and a benchmark suite. The pages here are the friendly version of the same material.

Acknowledgments

Acknowledgments.

Developed at the School of Artificial Intelligence, Taizhou University.

This codebase implements the SNN-QP research program led by Prof. Shuai Li (IEEE Fellow; Faculty of Information Technology and Electrical Engineering, University of Oulu, Finland), whose work on neurodynamic optimization originated this line of inquiry. The mathematical framework follows Mancoo, Keemink and Machens (NeurIPS 2020) and the broader projection-neural-network lineage (Hopfield–Tank, Kennedy–Chua, Xia–Wang, Liu–Wang).