The picture

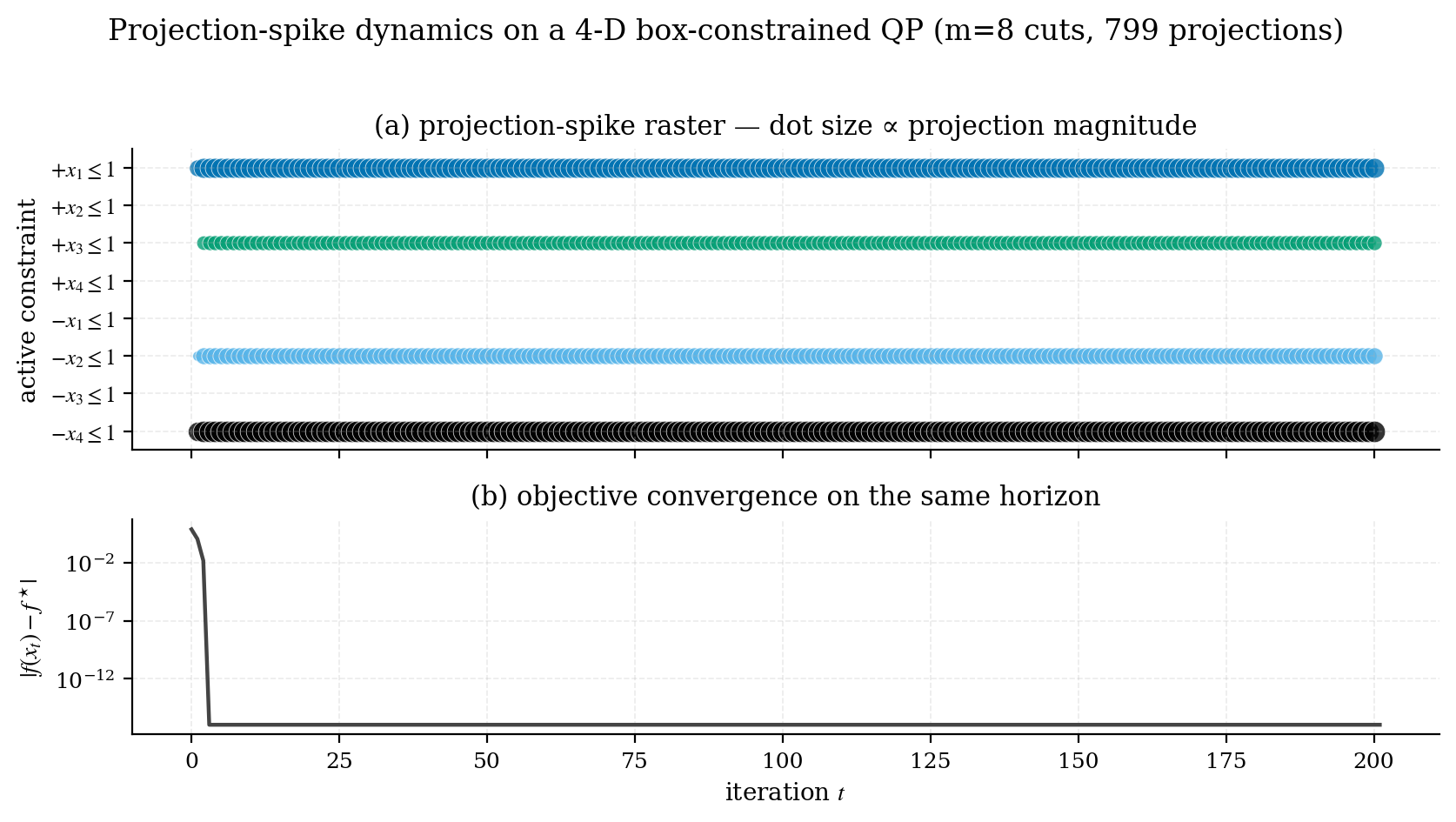

benchmarks/02_spike_raster.py on a 4-D box-constrained QP. Each row is one inequality constraint. Each dot at column t on row j is a projection event: at iteration t, constraint j became active and the solver applied a spike to push the iterate back onto the feasible side. The dot’s size grows with the spike’s displacement norm: bigger dot, bigger correction.

The bottom panel shows the objective gap on the same iteration axis, so you can see which spikes happen in the high-energy phase and which in the low-energy tail.

What you read off it

A few patterns to recognise:

Healthy convergence

A burst of large spikes early on across several constraints, decaying to a sparse train of small spikes on a stable subset. This is what the example above shows: the solver finds the active facets, projects the trajectory onto them, then settles.

A non-convex or unbounded problem

Spikes that never settle down: the dot sizes don’t decrease and the active set keeps changing. Either the problem isn’t a convex QP (you’ve fed in a non-PSD Hessian), or your initialisation is sending the trajectory through a long sequence of facets.
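One way to put a number on "the active set keeps changing" is to count how often consecutive spikes in the second half of the run hit different sets of constraints. The sketch below is an illustration only: `active_set_churn` and `tail_fraction` are hypothetical names, not part of the library, and the inputs are synthetic stand-ins for `result.spike_constraints`.

```python
import numpy as np

def active_set_churn(spike_constraints, tail_fraction=0.5):
    """Fraction of consecutive spike pairs in the tail whose active sets differ.

    Hypothetical helper: 0.0 means the tail always hits the same constraints,
    1.0 means every spike in the tail hits a different set than the previous one.
    """
    sets = [frozenset(int(j) for j in np.atleast_1d(a)) for a in spike_constraints]
    start = int(len(sets) * (1.0 - tail_fraction))
    tail = sets[start:]
    if len(tail) < 2:
        return 0.0
    changes = sum(a != b for a, b in zip(tail, tail[1:]))
    return changes / (len(tail) - 1)

# Synthetic examples, not solver output.
settled = [np.array([0, 2]), np.array([1]), np.array([0]),
           np.array([0]), np.array([0]), np.array([0])]
restless = [np.array([0]), np.array([1]), np.array([2]),
            np.array([0]), np.array([1]), np.array([2])]

churn_settled = active_set_churn(settled)
churn_restless = active_set_churn(restless)
print(churn_settled, churn_restless)
```

A value near zero in the tail matches the healthy pattern. A value that stays high is a hint to check the Hessian or the initialisation. It says nothing about dot sizes, so read it alongside the spike norms.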

A redundant constraint

A row that never fires. The constraint is in your formulation but never became active during the run, typically because it is implied by other constraints. Worth removing to simplify the problem; sometimes it’s a bug (you intended that constraint to bind).
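Silent rows can also be listed without looking at the plot. A minimal sketch, assuming only that `spike_constraints` is a list of index arrays as described for `SolverResult`; the helper name `silent_rows` is hypothetical and the data is synthetic.

```python
import numpy as np

def silent_rows(spike_constraints, n_constraints):
    """Return the indices of constraints that never appear in any spike."""
    fired = np.zeros(n_constraints, dtype=bool)
    for active in spike_constraints:
        fired[np.asarray(active, dtype=int)] = True
    return np.flatnonzero(~fired)

# Synthetic stand-in for result.spike_constraints on a 4-constraint problem.
example_spikes = [np.array([0, 2]), np.array([2]), np.array([0])]
never_fired = silent_rows(example_spikes, n_constraints=4)
print(never_fired)
```

On a real run you would pass the number of rows of your constraint matrix as `n_constraints`. A row listed here was inactive along this particular trajectory, which is weaker than being redundant, so check it before deleting the constraint.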

A poorly conditioned constraint

A row that fires every iteration with growing spike sizes. The auto step size is too aggressive for this constraint’s geometry. Drop k0_scale (default 0.5, try 0.2).
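Growing spike sizes on one row can be checked with a per-constraint trend: fit a straight line to the log of the spike norm against iteration and look at the sign of the slope. This is a rough diagnostic sketch, not something the library provides; `spike_growth_rates` and `min_events` are hypothetical names, the data is synthetic, and the fit assumes each row's spikes occur at distinct iterations.

```python
import numpy as np

def spike_growth_rates(spike_times, spike_constraints, spike_norms, min_events=3):
    """Map constraint index -> slope of log(spike norm) versus iteration.

    A positive slope means the spikes involving that row are growing.
    Rows with fewer than `min_events` spikes are skipped.
    """
    times = np.asarray(spike_times, dtype=float)
    norms = np.asarray(spike_norms, dtype=float)

    # Collect, for each constraint, the indices of the spikes it took part in.
    per_row = {}
    for k, active in enumerate(spike_constraints):
        for j in np.atleast_1d(active):
            per_row.setdefault(int(j), []).append(k)

    rates = {}
    for j, idx in per_row.items():
        if len(idx) < min_events:
            continue
        log_norms = np.log(np.maximum(norms[idx], 1e-300))  # guard against log(0)
        slope, _intercept = np.polyfit(times[idx], log_norms, 1)
        rates[j] = float(slope)
    return rates

# Synthetic data: row 0 doubles its spike norm each time, row 1 halves it.
times = np.arange(8)
constraints = [np.array([k % 2]) for k in range(8)]
norms = np.array([0.1, 1.0, 0.2, 0.5, 0.4, 0.25, 0.8, 0.125])

rates = spike_growth_rates(times, constraints, norms)
print(rates)
```

A row with a clearly positive rate that also fires at almost every iteration is the candidate for a smaller k0_scale.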

Reproducing it on your own problem

SolverResult exposes the raw arrays:

    result.spike_times             # iteration of each spike, shape (S,)
    result.spike_constraints       # list of index arrays, one per spike
    result.spike_norms             # ‖Δx‖_2 for each spike, shape (S,)
    result.spike_deltas            # the Δx itself, shape (S, n)
    result.spike_violation_values  # the violation that triggered each spike

A minimal raster plot:

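    import matplotlib.pyplot as plt
    import numpy as np

    # Assumes `result` is a SolverResult with at least one spike, and that `C`
    # is your constraint matrix (one row per constraint).
    fig, ax = plt.subplots(figsize=(8, 3))

    # Marker area grows with the spike norm; the largest spike gets size 76.
    sizes = 6 + 70 * (result.spike_norms / result.spike_norms.max())

    # One dot per (spike, active constraint) pair.
    for k, (t, active) in enumerate(zip(result.spike_times, result.spike_constraints)):
        for j in active:
            ax.scatter([t], [j], s=[sizes[k]], color=f"C{j % 10}", alpha=0.8)

    ax.set_xlabel("iteration")
    ax.set_ylabel("constraint")
    ax.set_yticks(np.arange(C.shape[0]))  # one tick per constraint row
    ax.invert_yaxis()                     # constraint 0 at the top
    plt.show()

The benchmark script benchmarks/02_spike_raster.py is the polished version, with an objective-gap subplot underneath and a consistent academic style.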
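The minimal plot issues one scatter call per dot, which can get slow on long runs. One alternative is to flatten the spike arrays into plain x, y and size vectors first and draw them in a single call. The helper below is a sketch under that assumption: `flatten_spikes` and its parameters are hypothetical names, not part of the library, and the demo data is synthetic.

```python
import numpy as np

def flatten_spikes(spike_times, spike_constraints, spike_norms,
                   min_size=6.0, max_extra=70.0):
    """Expand per-spike arrays into one (x, y, size) entry per dot."""
    norms = np.asarray(spike_norms, dtype=float)
    # Avoid dividing by zero when there are no spikes or all norms are zero.
    peak = norms.max() if norms.size and norms.max() > 0 else 1.0
    sizes = min_size + max_extra * norms / peak

    rows = [np.atleast_1d(np.asarray(a, dtype=int)) for a in spike_constraints]
    counts = np.array([len(r) for r in rows], dtype=int)

    xs = np.repeat(np.asarray(spike_times), counts)    # iteration of each dot
    ys = np.concatenate(rows) if rows else np.array([], dtype=int)  # constraint row
    ss = np.repeat(sizes, counts)                      # marker size of each dot
    return xs, ys, ss

# Synthetic example: two spikes, the first touching two constraints.
xs, ys, ss = flatten_spikes(
    spike_times=[3, 7],
    spike_constraints=[np.array([0, 2]), np.array([1])],
    spike_norms=[2.0, 1.0],
)
print(xs, ys, ss)
```

With these vectors the loop can be replaced by a single call such as `ax.scatter(xs, ys, s=ss, c=ys, cmap="tab10", alpha=0.8)`. The colours will not match the `C{j % 10}` cycle exactly.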

Why this matters

Classical QP solvers give you the active set at the optimum. The spike raster gives you the active set as a time series: which constraints fought for influence, when, and which ones eventually won. This is the kind of insight that, in classical optimisation, lives only in textbooks. Here, it’s a built-in side effect of how the solver works.

Next

- Try running example2_3d_polytope.py and inspecting the resulting raster. A 3-D polytope with four constraints is the smallest interesting case.
- Compare a healthy raster to one from a deliberately ill-conditioned problem (e.g. set k0_scale=2.0 and rerun a benchmark). See for yourself what “growing-amplitude spikes” look like.